
Why Is Cloaking a Problem in Digital Marketing in the Philippines?

Cloaking is one of the more controversial yet increasingly common **SEO manipulation tactics**, especially among black-hat or grey-hat web operators in competitive local search niches like **online gambling**, **digital lending**, and e-commerce.

What is cloaking? In SEO, **cloaking** is when a server shows different content or URLs to human visitors than to automated search engine crawlers, often producing misleading search results or ranking abuse.

For SEO specialists in major Philippine urban centers such as **Quezon City, Makati, and Cebu**, and for Davao-focused businesses, the practice creates an untrustworthy user experience. It can also hurt organic reach when competitors manipulate visibility with cloaking strategies that violate Google's Webmaster Guidelines (since renamed Google Search Essentials).

But how do you catch them in action? Manual inspection is not enough. Enter automation frameworks like **Selenium**, originally designed for browser-based application testing and now used in ethical SEO research and competitor intelligence circles. Let's uncover advanced detection methods next.

Fundamentals of Selenium and Its Application for SEO Auditing

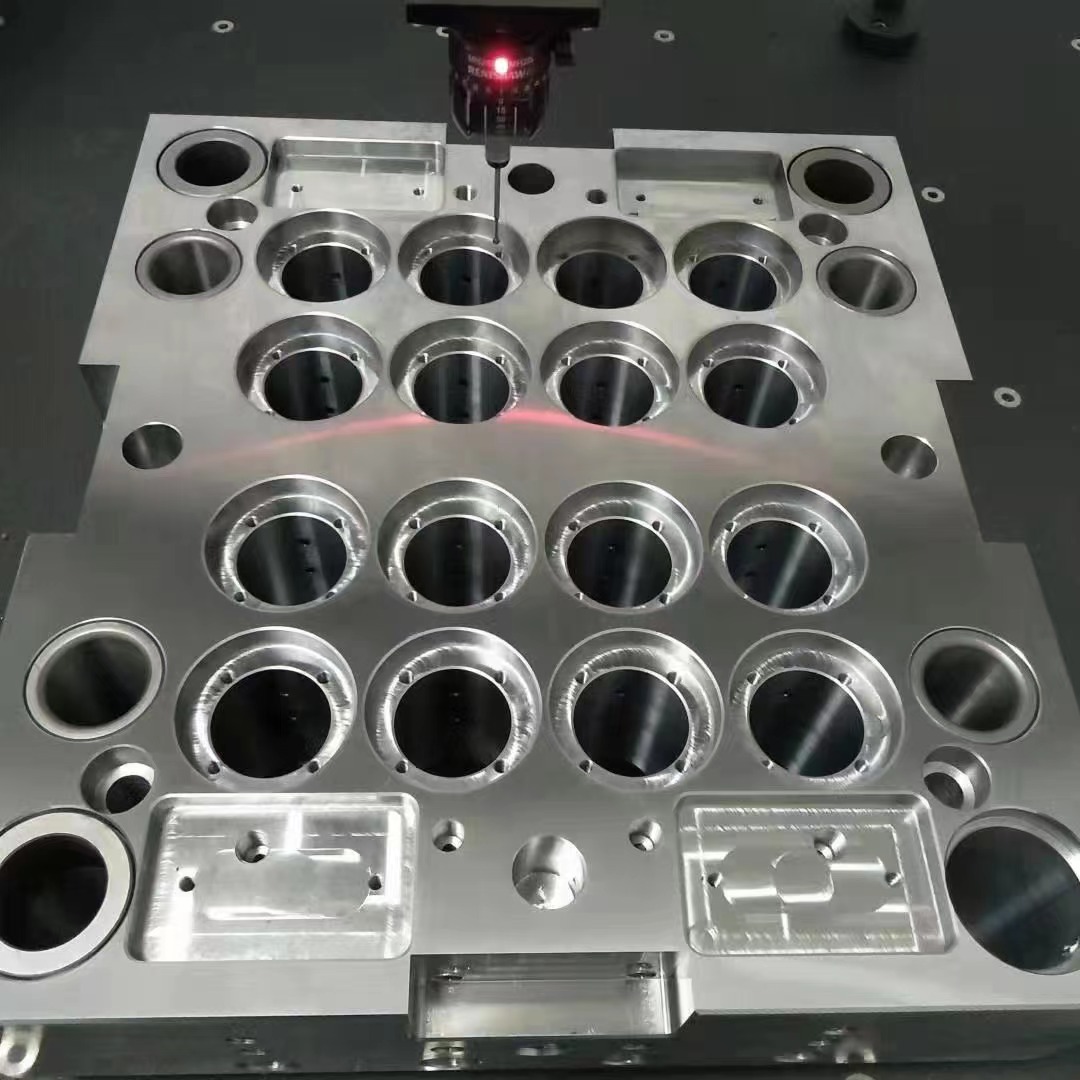

Selenium was not built with SEO in mind, but this is where technical creativity meets necessity. It can programmatically open websites in real or headless browsers, detect changes across sessions, inspect DOM elements at runtime, and simulate location or IP swaps through plugins, proxies, or VMs. That gives digital analysts a depth of visibility rarely available through conventional crawlers. This power comes from its architecture:

- Selenium WebDriver

- Support for browser scripting across Chrome, Firefox, and Edge

- Real DOM execution in an actual browser engine, close to what a human visitor sees, which is key for exposing dynamic cloaking layers

| Tool | Renders dynamic JavaScript? | Detects client-side redirection? | Emulates geolocation/IP swaps easily? |
|---|---|---|---|
| cURL / HTTP clients | No | No | No |
| Puppeteer (Node) | Yes | Yes | Sometimes |
| Python + Selenium | Yes | Yes | Customizable via proxy profiles |
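As a minimal sketch of the Python + Selenium row above, the following builds Chrome launch arguments for two browsing profiles (one presenting a crawler-like user agent, one a regular desktop user agent) and optionally routes each through a proxy. The helper names `chrome_arguments` and `fetch_rendered_html`, the user-agent strings, and the proxy address are illustrative assumptions rather than anything prescribed by Selenium; `--user-agent`, `--headless=new`, and `--proxy-server` are standard Chrome command-line switches.

```python
from typing import List, Optional

# Illustrative user-agent strings (assumptions; check current values before use).
CRAWLER_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
HUMAN_UA = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36"
)


def chrome_arguments(user_agent: str, headless: bool = True,
                     proxy: Optional[str] = None) -> List[str]:
    """Return the Chrome command-line switches for one browsing profile."""
    args = [f"--user-agent={user_agent}"]
    if headless:
        args.append("--headless=new")
    if proxy:
        # e.g. "http://127.0.0.1:8080" (hypothetical proxy endpoint)
        args.append(f"--proxy-server={proxy}")
    return args


def fetch_rendered_html(url: str, arguments: List[str]) -> str:
    """Load `url` in Chrome and return the DOM after JavaScript has run."""
    # Imported lazily so the pure helper above works without Selenium installed.
    from selenium import webdriver

    options = webdriver.ChromeOptions()
    for arg in arguments:
        options.add_argument(arg)
    driver = webdriver.Chrome(options=options)
    try:
        driver.get(url)
        return driver.page_source
    finally:
        driver.quit()
```

Calling `fetch_rendered_html(url, chrome_arguments(CRAWLER_UA))` and then the same URL with `HUMAN_UA` yields two snapshots to compare. Note that a spoofed user agent alone may not trigger cloaking on sites that also verify crawler IP addresses.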

Advanced Setup to Replicate Crawling vs Human Views with Proxies and Geolocation Swapping

Cloaked pages often vary by geographic context, especially where regions carry distinct legal restrictions. An online gaming site might, for example, hide adult services from Metro Manila IP addresses while exposing alternate content to users in CDO, Clark, or Palawan. To test whether a website returns the same page when visited directly as when it is scraped, the setup steps are:

- Select a target list of high-ranking domains to monitor (e.g., casino or loan platforms under the .ph TLD).
- Incorporate randomized geolocation swapping (Manila- or Cebu-based IPs, preferably via residential proxies).
- Create separate Selenium driver session profiles: one for 'machine mode', and another mimicking human browsing conditions, with gestures enabled through a tool such as PyDirectInput.
- Capture and compare the final rendered DOM using checksum hashes or similarity measures such as cosine similarity (for example via spaCy).

Mandatory dependencies:

- chromedriver (or geckodriver for Firefox)
- selenium Python package
- Requests library (for backend header logging purposes if necessary)
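The compare step can be sketched with the standard library alone. This is a simplified stand-in, not the spaCy-based approach mentioned above: it hashes the visible text for an exact-match check and falls back to a plain bag-of-words cosine similarity. The function names and the 0.8 threshold are assumptions chosen for illustration and would need tuning against real pages.

```python
import hashlib
import math
import re
from collections import Counter


def normalize(html: str) -> str:
    """Strip scripts, styles, and tags, then collapse whitespace and lowercase."""
    text = re.sub(r"<(script|style)\b.*?</\1>", " ", html, flags=re.S | re.I)
    text = re.sub(r"<[^>]+>", " ", text)
    return re.sub(r"\s+", " ", text).strip().lower()


def checksum(html: str) -> str:
    """SHA-256 of the normalized text; equal hashes mean identical visible text."""
    return hashlib.sha256(normalize(html).encode("utf-8")).hexdigest()


def cosine_similarity(html_a: str, html_b: str) -> float:
    """Cosine similarity between word-count vectors of the two pages."""
    a = Counter(normalize(html_a).split())
    b = Counter(normalize(html_b).split())
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def looks_cloaked(html_a: str, html_b: str, threshold: float = 0.8) -> bool:
    """Flag a pair when the hashes differ and similarity is below the threshold."""
    if checksum(html_a) == checksum(html_b):
        return False
    return cosine_similarity(html_a, html_b) < threshold
```

Feeding it the 'machine mode' and 'human mode' snapshots of the same URL gives a first-pass signal. A low score is a prompt for manual review rather than proof of cloaking, since ads, timestamps, and personalization also change page text.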

Analyzing Page Discrepancies: Detecting Hidden Redirect Patterns Through Code Profiling

Visual inspection of differences sometimes helps, but seasoned manipulators hide cloaking behind script-based redirections, lazy-load triggers that fire after time-outs, and cookie-driven fallbacks. These stay invisible unless you actively look for them with code-level tools such as:

- JavaScript hook interception during the Selenium run
- Extraction of `performance.timing` events
- Custom DOM mutation observers running inside the browser context
- Real-time DOM string matching across render cycles

A particularly effective workflow combines Python's logging and exception handling with Selenium's `WebDriverWait` conditions and tracking of the browser's JavaScript error console, as in the following snippet:

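```python
from selenium.webdriver.common.by import By
from selenium.webdriver.support.ui import WebDriverWait
from selenium.webdriver.support import expected_conditions as EC

# Simulated example snippet logic.
# For full console capture in Chrome, enable logging at startup, e.g.
# options.set_capability("goog:loggingPrefs", {"browser": "ALL"})
def log_js_error(browser_driver):
    """Print JavaScript errors captured in the browser console log."""
    logs = browser_driver.get_log("browser")
    for log in logs:
        # Console errors are reported at the SEVERE level.
        if log.get("level") == "SEVERE":
            print("JavaScript ERROR logged:")
            print(log["message"])

# ...
```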
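Delayed script-based redirects can also be surfaced by watching the address bar over time. The sketch below is one possible approach, not a standard Selenium feature: it polls `driver.current_url` for a fixed window after loading a page and classifies any change. The function names, the 10-second window, and the polling interval are assumptions for illustration; `driver` is any Selenium WebDriver instance.

```python
import time
from urllib.parse import urlsplit


def redirect_kind(requested_url: str, final_url: str) -> str:
    """Classify how the final URL differs from the requested one."""
    req, fin = urlsplit(requested_url), urlsplit(final_url)
    if req.hostname != fin.hostname:
        return "cross-domain"
    if (req.path.rstrip("/"), req.query) != (fin.path.rstrip("/"), fin.query):
        return "same-domain"
    return "none"


def watch_for_redirects(driver, url: str, observe_seconds: float = 10.0,
                        poll_seconds: float = 0.5) -> list:
    """Load `url` and record every URL change seen during the observation window.

    Polling `current_url` catches redirects that fire after the initial page
    load, such as timer-driven or cookie-driven JavaScript navigation.
    """
    driver.get(url)
    seen = [driver.current_url]
    deadline = time.monotonic() + observe_seconds
    while time.monotonic() < deadline:
        current = driver.current_url
        if current != seen[-1]:
            seen.append(current)
        time.sleep(poll_seconds)
    return seen
```

Running `watch_for_redirects` once per session profile and passing the first and last entries to `redirect_kind` shows whether the 'human' profile is sent somewhere the 'machine' profile is not.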

Tackling Obfuscation Tricks Used With Anti-Bot Technologies

One critical issue with running open-source tools like Selenium in a modern anti-scraping landscape is the bot detection built into some Philippine-market websites, typically supplied by cloud providers with anti-spider engines such as Cloudflare, Imperva, or Radware. Common techniques employed:

- Canvas fingerprint detection: some websites check whether canvas rendering matches the fingerprint of a genuine device.
- Automation and headless-mode detection: when a visit is flagged, the site redirects to a dummy version, which often masks the cloaking itself, or shows a "Please solve reCAPTCHA" trap without clear explanation.
- Mouse movement profiling: cloaking servers track subtle interactions that basic Puppeteer or Selenium scripts do not produce (wrappers such as pyppeteer or undetected-chromedriver can narrow this gap).

Mitigation options:

- Adjust Chrome launch options, and optionally override navigator properties through CDP (Chrome DevTools Protocol), to mask the automation origin:

```python
from selenium import webdriver

options = webdriver.ChromeOptions()
options.add_experimental_option('excludeSwitches', ['enable-automation'])
options.add_experimental_option('useAutomationExtension', False)
browser = webdriver.Chrome(options=options)
```

- Introduce randomized idle periods before executing actions, which mimics 'organic' delay times better.

- Employ **headful modes** instead of pure headless ones. They are heavier but harder to fingerprint as robotic visits, which matters for accurate results when testing large-scale affiliate marketing blogs, such as those originating from the Bataan Freeport region.
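The randomized idle periods and navigator masking mentioned above might look like the following. This is a rough sketch under stated assumptions: the helper names and the delay bounds are illustrative, and `hide_webdriver_flag` relies on `execute_cdp_cmd`, which is only available on Chromium-based Selenium drivers. Whether such masking gets past a given anti-bot product is not guaranteed.

```python
import random
import time
from typing import Optional


def human_delay(min_seconds: float = 1.5, max_seconds: float = 6.0,
                rng: Optional[random.Random] = None) -> float:
    """Pick a randomized pause length; the default bounds are illustrative."""
    rng = rng or random.Random()
    return rng.uniform(min_seconds, max_seconds)


def idle(min_seconds: float = 1.5, max_seconds: float = 6.0) -> None:
    """Sleep for a randomized period before the next browser action."""
    time.sleep(human_delay(min_seconds, max_seconds))


def hide_webdriver_flag(driver) -> None:
    """Use CDP to redefine navigator.webdriver on every newly loaded document."""
    driver.execute_cdp_cmd(
        "Page.addScriptToEvaluateOnNewDocument",
        {"source": "Object.defineProperty(navigator, 'webdriver', "
                   "{get: () => undefined});"},
    )
```

In practice `hide_webdriver_flag(browser)` would be called once right after the driver is created, and `idle()` between navigation or click steps.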